NEW YORK (VINnews/Rabbi Yair Hoffman) – A friend in Yerushalayim was one of the people who discovered the real reason why the Volozhin Yeshiva closed down. He did so by thoroughly going through the Russian archives from the period of Tsarist rule. He discovered that the closure could be traced to tension between supporters of the Netziv zatzal and those of Rav Chaim Soloveitchik zatzal.

Much of the Torah world nowadays has adopted the remarkable insights and methodology of Rav Chaim in how it understands and dissects Torah concepts.

It may, however, also pay for the world to begin heeding the prescience of the Netziv and his remarkable insights about the dangers that we face in the world.

It seems that Sam Altman may have stumbled onto something. But we are getting ahead of ourselves.

Let’s begin with how the Torah describes the generation of the Tower of Babel:

“And the entire earth had one language and unified words… and they said: Come, let us build ourselves a city and a tower whose top reaches the heavens, and let us make a name for ourselves.” (Bereishis 11:1,4)

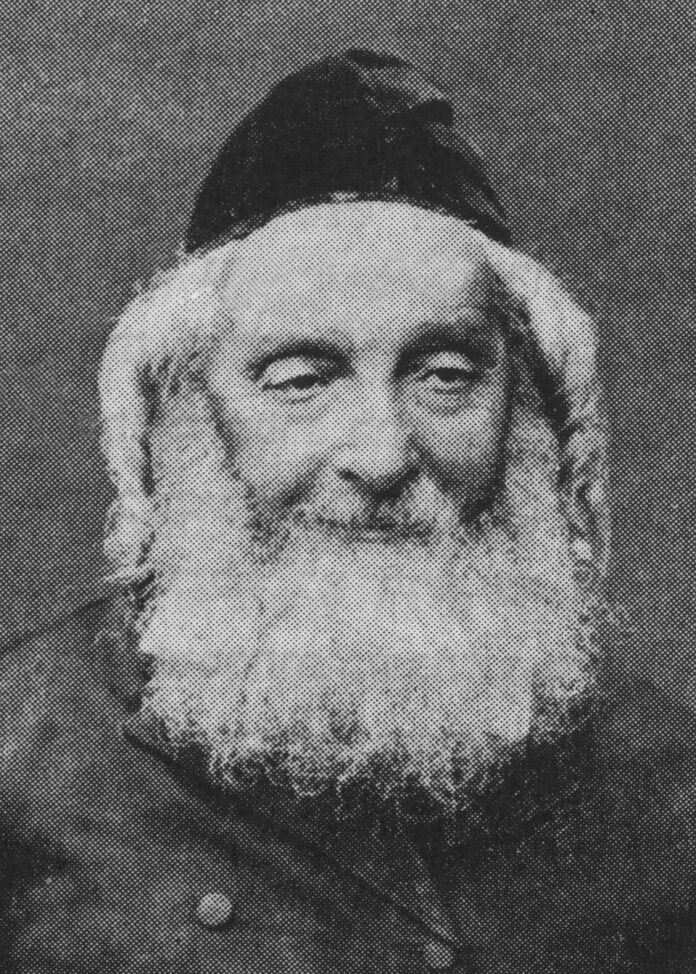

The Netziv — Rav Naftali Tzvi Yehuda Berlin, Rosh Yeshiva of Volozhin and one of the greatest Torah authorities of the 19th century — in his Ha’amek Davar on this passage makes a striking observation. He explains that the sin of the generation of Babel was not the building itself, nor even the ambition. The sin was the totalitarian impulse behind it — the desire to use their shared technological and organizational power to centralize control, eliminate individuality, and ensure that no one could opt out or think differently. The Netziv notes that Hashem’s response — scattering them and diversifying their languages — was not merely a punishment but a correction: restoring the pluralism and diversity of human society that the builders had sought to destroy.

The Netziv writes (Ha’amek Davar, Bereishis 11:4):

“שלא יתפזרו על פני כל הארץ, רצו שיהיו כולם תחת ממשלה אחת ומחשבה אחת”

“So that they would not scatter across the face of the earth — they wanted everyone to be under one authority and one way of thinking.”

He explains that this concentration of power and thought — using the greatest technology of their age to enforce uniformity — was the core of their sin. Hashem responded by building diversity back into human civilization.

Artificial intelligence concentrated in the hands of a small number of companies, governments, or individuals, capable of shaping what billions of people see, think, and do, is a precise structural echo of what the Netziv identified as the danger of Babel.

With this Torah foundation in place — the danger of using great technological power to concentrate control rather than spread blessing — we can now turn to what OpenAI itself is warning the world about.

The Warning

OpenAI, the company that makes ChatGPT, just released a 13-page report saying that artificial intelligence, in its newest wave, is about to change the world more dramatically than electricity, the car, or the internet ever did. They’re calling the next stage “superintelligence”: AI systems capable of outperforming the smartest humans even when those humans are assisted by AI.

They want to start a national conversation now, before things get out of hand, about how to make sure this technology helps everyone — not just billionaires and big companies.

The Two Big Goals

The document is organized around two main ideas:

- Building an Open Economy — meaning: make sure regular people get a piece of the pie.

- Building a Resilient Society — meaning: make sure things don’t go terribly wrong.

The Dangers They’re Warning About

This is where the Netziv’s warning from Babel becomes very relevant. But before listing the dangers, there is a Midrash that speaks directly to how we should respond to danger — and it comes from Yaakov Avinu.

Yaakov Avinu’s Three-Part Strategy: A Model for Facing Existential Threats

When Yaakov learned that his brother Eisav was approaching with four hundred armed men, the Torah tells us:

“And Yaakov was very afraid and distressed, and he divided the people who were with him… into two camps.” (Bereishis 32:8)

The Midrash (Bereishis Rabbah 76:3) teaches that Yaakov prepared for the confrontation with Eisav in three ways: tefillah (prayer), doron (gifts and diplomacy), and milchamah (preparing for battle).

Rashi on this passage codifies this as a permanent lesson — that when facing a serious threat, a person must simultaneously pray to Hashem, attempt to make peace and find common ground, and take practical defensive measures. Relying on only one of these is insufficient.

All three must work together.

This is precisely the framework that responsible AI governance demands. Prayer and moral grounding (tefillah) — remembering that human beings are not the ultimate authors of history and that technology must be guided by true Torah values beyond profit. Diplomacy and cooperation (doron) — the international cooperation, information sharing, and public-private collaboration that OpenAI’s report is calling for. And practical defensive preparation (milchamah) — the auditing systems, safety regulations, containment playbooks, and guardrails that must be built now, before the threat fully arrives.

Yaakov did not wait until Eisav was at the door. He prepared in advance, on multiple fronts simultaneously. That is the model OpenAI is — perhaps without knowing it — advocating for.

Here are the specific dangers the document identifies:

Jobs disappearing. Frontier systems have advanced from supporting tasks that take people minutes to complete, to tasks that take them hours to complete. If progress continues, we can expect systems to be capable of carrying out projects that currently take people months. That means accountants, paralegals, writers, coders, and many others could find their jobs automated away — fast.

Wealth concentrating in a few hands. Without thoughtful policies, AI could widen inequality by compounding advantages for those already positioned to capture the upside while communities that begin with fewer resources fall further behind, excluded from new tools, new industries, and new opportunities. This is precisely the Netziv’s warning from Babel brought to life.

Misuse by bad actors. Some systems may be misused for cyber or biological harm. Imagine a terrorist using AI to design a new disease, or a hostile government using AI to hack critical infrastructure. These are concerns the document raises directly.

AI acting against human wishes. AI systems may act in ways that are misaligned with human intent or operate beyond meaningful human oversight. In plain English: we may build something so smart that we can no longer control it.

Governments using AI to crush freedom. There is a real risk of governments or institutions deploying AI in ways that undermine values. A government with AI surveillance tools could monitor every citizen, suppress dissent, and eliminate opposition — faster and more thoroughly than any dictator in history ever could.

Harm to young people. AI could create new pressures on social and emotional well-being, including for young people, if deployed without adequate safeguards.

What They’re Proposing — Yaakov’s Three Paths in Practice

Corresponding to Yaakov’s three-part strategy, Altman’s proposals fall naturally into three categories:

Tefillah: connecting to Hashem and the morality of a Higher Power. OpenAI calls for creating structured ways for public input so that alignment isn’t defined only by engineers or executives behind closed doors. Someone has to ask the deeper question: what kind of world are we trying to build? That is ultimately a moral and spiritual question, not a technical one.

Doron: diplomacy and shared prosperity. OpenAI proposes creating a Public Wealth Fund that provides every citizen, including those not invested in financial markets, with a stake in AI-driven economic growth. This in itself may be dangerous too, as a form of socialism or communism that must be carefully watched. They also call for treating access to AI as foundational for participation in the modern economy, similar to mass efforts to increase global literacy, or to make sure that electricity and the internet reach remote parts of the globe. Again, this too can be quite dangerous.

Milchamah: practical defense and preparation. Altman’s document calls for developing and testing coordinated playbooks to contain dangerous AI systems once they have been released into the world, as well as strengthening auditing institutions and establishing international information-sharing frameworks so that dangerous capabilities can be identified and contained before they cause irreversible harm.

The Pasuk in Yechezkel

There is one final idea that may speak to the topic. In Yechezkel 33:6, Hashem tells the Navi:

“But if the watchman sees the sword coming and does not blow the shofar, and the people are not warned, and the sword comes and takes a life — he is taken for his iniquity.” (Yechezkel 33:6)

The Radak in his commentary explains that the tzofeh, the watchman, holds not just a military role but a moral one. Anyone who has the ability to see danger coming and the platform to warn others carries an obligation to speak up. Silence in the face of foreseeable harm itself carries responsibility for that harm.

The Netziv’s warning from Babel, Yaakov’s three-part strategy before Eisav, and the Radak’s explanation of the pasuk in Yechezkel all converge on a single thought: great power demands great preparation, great moral seriousness, and great humility. Hashem built diversity and pluralism into human civilization for a reason. Any technology, however brilliant, that threatens to undo that diversity and concentrate power in the hands of a few is walking down the road of Babel. The question for our generation is whether we will learn that lesson or repeat the mistake.

The author can be reached at [email protected]